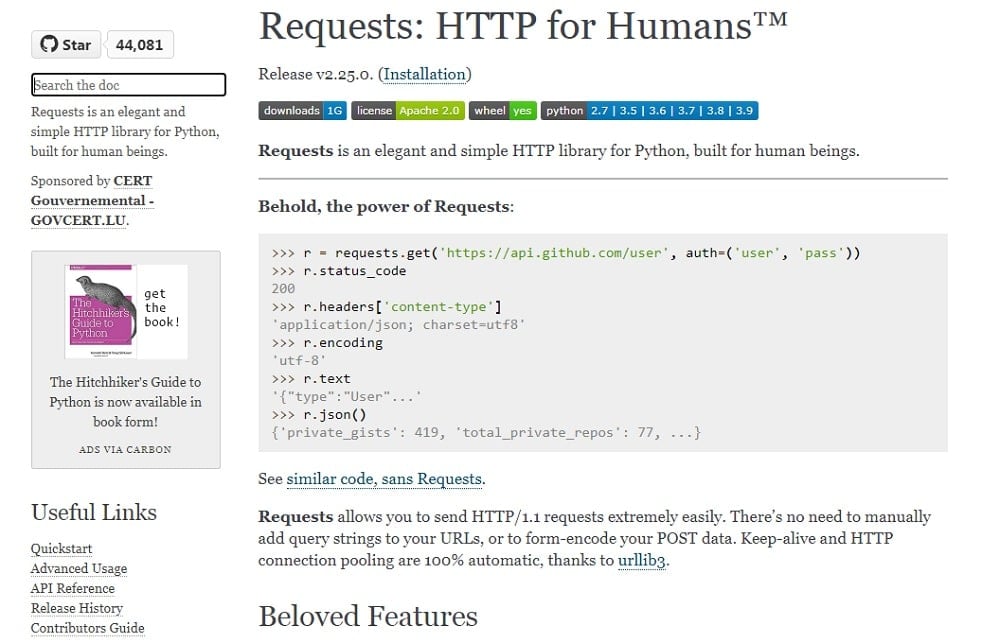

Once we have a function that grabs all image URLs, we need a function to download files from the web with Python. I brought the following function from this tutorial: `def download(url, pathname):`. It downloads a file given a URL and puts it in the folder `pathname`. If the path doesn't exist, the function first creates that directory (`# if path doesn't exist, make that path dir`).
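The body of `download` is not shown above, so the following is only a sketch of what such a function might look like, assuming a streamed download with `requests`. The `local_path` helper, the `chunk_size` parameter, and the 60-second timeout are illustrative choices, not part of the original tutorial, and the progress bar is left out to keep the example short.

```python
import os

import requests


def local_path(url, pathname):
    """Hypothetical helper: the file path inside `pathname` that `url` is saved to."""
    # use the last path segment of the URL as the file name
    return os.path.join(pathname, url.split("/")[-1])


def download(url, pathname, chunk_size=1024):
    """Download the file at `url` into the folder `pathname` and return its path."""
    # if path doesn't exist, make that path dir
    os.makedirs(pathname, exist_ok=True)
    filename = local_path(url, pathname)
    # stream the response so large files are not held in memory all at once
    with requests.get(url, stream=True, timeout=60) as response:
        response.raise_for_status()
        with open(filename, "wb") as f:
            for chunk in response.iter_content(chunk_size=chunk_size):
                f.write(chunk)
    return filename
```

Calling `download("https://example.com/img/cat.png", "images")` (a placeholder URL) would write the file to `images/cat.png`, creating the `images` folder if needed.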

The core function grabs all image URLs of a web page: `def get_all_images(url):`. It starts by fetching and parsing the page: `soup = bs(requests.get(url).content, "html.parser")`.

The HTML content of the web page is now in the `soup` object. To extract all img tags, we use the `soup.find_all("img")` method, which retrieves all img elements as a Python list. Let's see it in action: `urls = []`, followed by `for img in tqdm(soup.find_all("img"), "Extracting images"):`. I've wrapped the list in a tqdm object just to print a progress bar.

To grab the URL of an img tag, we read its src attribute. However, some tags do not contain the src attribute; we skip those with a continue statement (`# if img does not contain src attribute, just skip`).

Next, we need to make sure that the URL is absolute (`# make the URL absolute by joining domain with the URL that is just extracted`).

Some URLs contain HTTP GET key-value pairs that we don't want (they end with something like "/image.png?c=3.2.5"), so let's remove them inside a `try:` block. We get the position of the '?' character and remove everything after it. If there is no '?', a ValueError is raised, which is why I wrapped it in a try/except block (of course you can implement it in a better way; if so, please share with us in the comments below).

Finally, let's make sure that every URL is valid and return all the image URLs (`# finally, if the url is valid`).
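The URL handling steps described above (make the URL absolute, strip the query string, validate) can be sketched on their own, without the HTML parsing. The helper name `clean_image_url` and the example.com URLs are assumptions made for illustration; only the standard library is used.

```python
from urllib.parse import urljoin, urlparse


def is_valid(url):
    """Return True if `url` has both a scheme and a domain name."""
    parsed = urlparse(url)
    return bool(parsed.scheme) and bool(parsed.netloc)


def clean_image_url(page_url, src):
    """Hypothetical helper: turn an img `src` value into a clean absolute URL, or None."""
    # if img does not contain src attribute, just skip
    if not src:
        return None
    # make the URL absolute by joining the page URL with the extracted value
    img_url = urljoin(page_url, src)
    try:
        # drop the query string, e.g. "/image.png?c=3.2.5" becomes "/image.png"
        pos = img_url.index("?")
        img_url = img_url[:pos]
    except ValueError:
        # no '?' in the URL, nothing to remove
        pass
    # finally, keep the URL only if it is valid
    return img_url if is_valid(img_url) else None
```

One possible alternative to the try/except block is `img_url.partition("?")[0]`, which returns the whole string when no '?' is present.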

Open up a new Python file and import the necessary modules: `import requests` and `from urllib.parse import urljoin, urlparse`.

First, let's make a URL validator that makes sure the URL passed is a valid one. Some websites put encoded data in the place of a URL, so we need to skip those: `def is_valid(url):`. The `urlparse()` function parses a URL into six components; we just need to see if the netloc (domain name) and scheme (protocol) are there.
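A minimal sketch of the validator described above, assuming its body only needs to check the two components the text names. The sample URLs are placeholders.

```python
from urllib.parse import urlparse


def is_valid(url):
    """Return True if `url` has both a scheme (protocol) and a netloc (domain name)."""
    parsed = urlparse(url)
    return bool(parsed.scheme) and bool(parsed.netloc)


if __name__ == "__main__":
    # placeholder inputs: an absolute URL, a relative path, and inline encoded data
    for candidate in (
        "https://example.com/logo.png",
        "/logo.png",
        "data:image/png;base64,AAAA",
    ):
        print(candidate, is_valid(candidate))
```

Only the first candidate passes: the relative path has neither scheme nor domain, and the encoded data has a scheme but no domain.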


Have you ever wanted to download all images on a certain web page? In this tutorial, you will learn how to build a Python scraper that retrieves all images from a web page given its URL and downloads them using the requests and BeautifulSoup libraries.

To get started, we need a few dependencies. Let's install them: `pip3 install requests bs4 tqdm`

bing_image_downloader is a Python library for downloading images in bulk. This program lets you download tons of images from Bing. The package uses async URL fetching, which makes downloading very fast. Please do not download or use any image that violates its copyright terms.

Usage: `from bing_image_downloader import downloader`, then `downloader.download(query_string, limit=100, output_dir='dataset', adult_filter_off=True, force_replace=False, timeout=60, verbose=True)`.

- `limit`: (optional, default is 100) Number of images to download.
- `output_dir`: (optional, default is 'dataset') Name of the output directory.
- `adult_filter_off`: (optional, default is True) Enable or disable adult filtering.
- `force_replace`: (optional, default is False) Delete the folder if present and start a fresh download.
- `timeout`: (optional, default is 60) Timeout for the connection, in seconds.
- `filter`: (optional, default is "") Filter to apply to the results.
- `verbose`: (optional, default is True) Enable the downloaded message.

You can also test the program by running `test.py keyword`.